How Simple Facial Drawings Are Bypassing AI-Powered Age Verification Systems

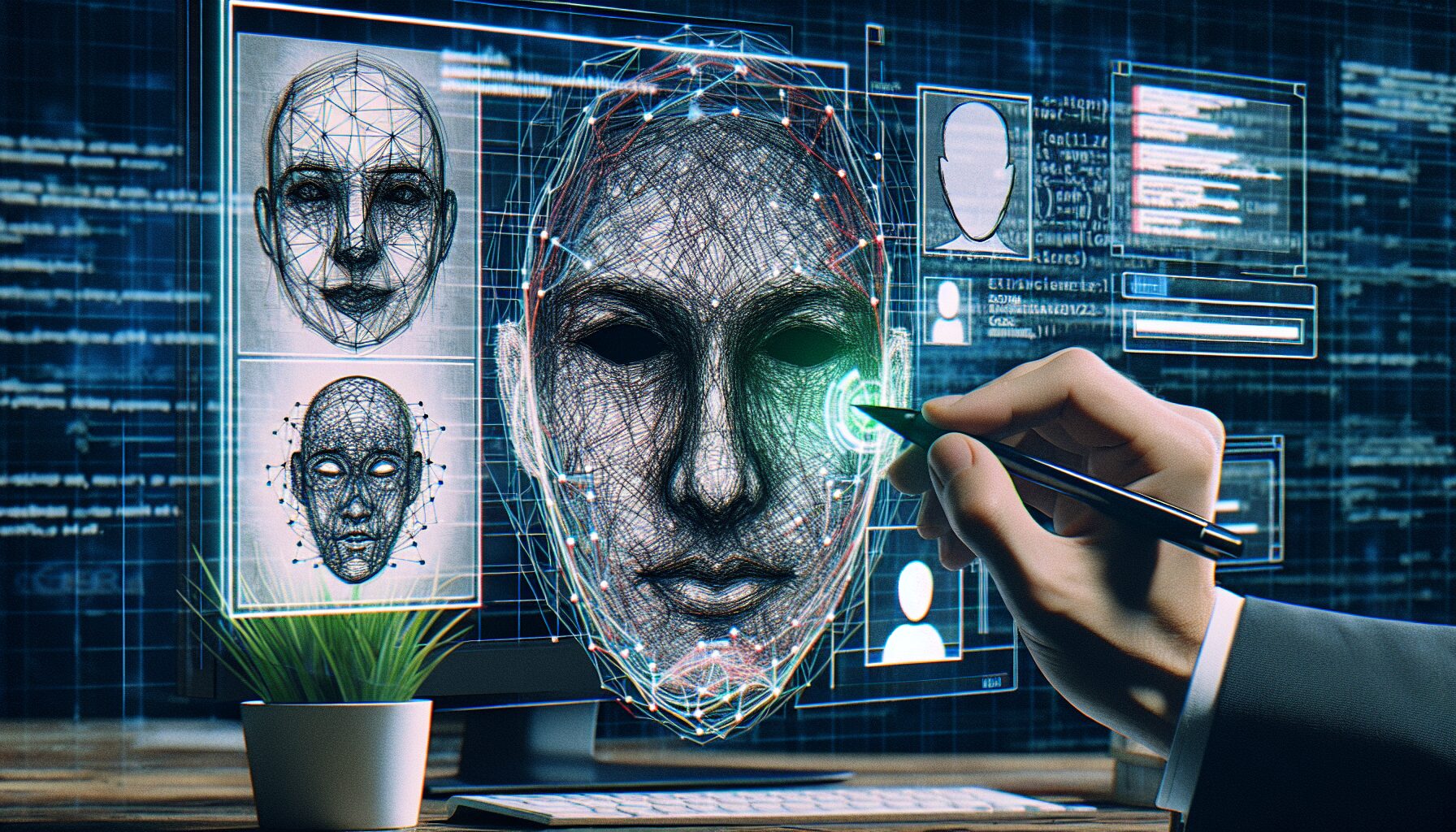

The rapid deployment of automated age verification technology has given platforms a false sense of security in their efforts to protect minors from age-restricted content. A troubling discovery exposes significant vulnerabilities in these systems: children are successfully deceiving facial age-estimation algorithms using nothing more than features drawn on their faces.

The Growing Problem with Digital Age Verification

Social media platforms and content streaming services increasingly rely on machine learning-based software to verify a user's age before granting access to restricted material. These systems, which use biometric scanning and facial analysis to estimate whether a user meets a minimum age requirement, represent a major innovation in content moderation. Yet researchers and cybersecurity experts are warning of fundamental weaknesses in these barriers.

The technology behind these verification systems typically analyzes facial features, bone structure, and other biometric markers to estimate age. Companies have invested heavily in it, believing computational analysis could provide reliable age gating. What they didn’t anticipate was how easily the systems could be fooled by deliberate, simple alterations to facial appearance.

Understanding the Vulnerability: Why Drawings Work

The exploit itself is remarkably simple. Young users draw exaggerated facial features, particularly mustaches and beards, along with other distinguishing marks, directly on their faces before submitting photos or video for age verification. These additions introduce enough visual noise and anomalies to confuse the underlying machine learning models, which then either misclassify the user's age or fail to analyze the facial structure altogether.

How Machine Learning Models Get Confused

AI systems that estimate age are trained on datasets of faces at various life stages. Input that departs from that training data, such as drawings that obscure natural facial features, falls outside the patterns the model has learned, and its estimates become unreliable. Unlike a human reviewer, who would immediately recognize a drawn-on mustache as fake, the model lacks the contextual reasoning needed to distinguish genuine features from artificial alterations.
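
One mitigation, shown here as a minimal sketch under stated assumptions rather than any vendor's actual logic, is to make the age gate fail closed: when the model cannot analyze the face or reports low confidence, the request is escalated to a stronger check instead of being allowed. The `AgeEstimate` type, the `gate_decision` function, the 5-year safety margin, and the 0.9 confidence threshold are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AgeEstimate:
    """Output of a hypothetical facial age-estimation model."""
    face_detected: bool              # False if no usable face was found
    estimated_age: Optional[float]   # years; None if the face could not be analyzed
    confidence: float                # hypothetical calibrated score in [0.0, 1.0]


def gate_decision(est: AgeEstimate, min_age: int = 18,
                  margin: float = 5.0, min_confidence: float = 0.9) -> str:
    """Return "allow" or "escalate"; unreliable input never results in "allow"."""
    # Fail closed: a face the model cannot analyze is not treated as a pass.
    if not est.face_detected or est.estimated_age is None:
        return "escalate"
    # Low-confidence estimates (e.g. on unusual input) go to a stronger check.
    if est.confidence < min_confidence:
        return "escalate"
    # Require the estimate to clear the minimum age by a safety margin.
    if est.estimated_age - margin >= min_age:
        return "allow"
    return "escalate"


if __name__ == "__main__":
    print(gate_decision(AgeEstimate(True, 30.0, 0.95)))   # allow
    print(gate_decision(AgeEstimate(True, 21.0, 0.95)))   # escalate: within the margin
    print(gate_decision(AgeEstimate(False, None, 0.0)))   # escalate: no usable face
```

A gate like this only prevents failures of analysis from being treated as a pass; it does not catch a model that confidently overestimates a user's age, which is why additional verification layers remain necessary.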

The Broader Cybersecurity Implications

This vulnerability extends beyond age verification. It highlights a critical gap in biometric security: if facial analysis systems can be defeated by such elementary methods, the reliability of biometric authentication in other domains, from financial technology to access control systems, is also open to question.

Industry Response and Current Solutions

Technology companies and startups specializing in identity verification are scrambling to address these vulnerabilities. Some platforms have begun implementing additional verification layers, including live video submissions with real-time analysis, behavioral biometrics, and cross-referencing with government-issued identification. However, each solution presents its own challenges regarding privacy, accessibility, and user friction.

Established device manufacturers and software developers are investing in more robust machine learning models trained specifically to detect deliberate attempts to obscure facial features. Yet this approach becomes increasingly difficult as the cat-and-mouse game escalates between those designing verification systems and those seeking to circumvent them.

The Innovation Challenge for Content Platforms

Protecting minors from inappropriate content remains a legitimate responsibility for digital platforms. However, relying primarily on automated facial analysis has fundamental limitations. Startups focused on age verification technology must balance security effectiveness against practical usability and privacy considerations.

Forward-thinking companies are exploring hybrid approaches combining multiple verification methods rather than depending on single-point solutions. These include behavioral analysis, device fingerprinting, cross-platform data validation, and integration with trusted third-party identity providers.
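
The hybrid idea can be illustrated with a small decision rule. This is a minimal sketch assuming each verification method reduces to a pass/fail signal; the `Signals` fields, the `hybrid_decision` function, the rule that a trusted identity provider is sufficient on its own, and the two-of-three threshold for weaker signals are hypothetical choices, not any platform's documented policy.

```python
from dataclasses import dataclass


@dataclass
class Signals:
    """Hypothetical pass/fail results from independent verification methods."""
    facial_estimate_passed: bool   # facial age-estimation gate
    behavioral_passed: bool        # behavioral-analysis check
    device_history_passed: bool    # device-fingerprint / account-history check
    trusted_id_verified: bool      # confirmation from a third-party identity provider


def hybrid_decision(s: Signals, required_weak_signals: int = 2) -> bool:
    """Grant access if a trusted identity provider confirmed age, or if enough
    weaker, independent signals agree. No single weak signal suffices alone."""
    if s.trusted_id_verified:
        return True
    weak_signals = [s.facial_estimate_passed, s.behavioral_passed,
                    s.device_history_passed]
    # bools count as 0/1, so sum() gives the number of passing weak signals
    return sum(weak_signals) >= required_weak_signals


if __name__ == "__main__":
    # Facial estimate fooled, but nothing else agrees: access denied.
    print(hybrid_decision(Signals(True, False, False, False)))  # False
    # Two independent weak signals agree: access granted.
    print(hybrid_decision(Signals(True, True, False, False)))   # True
```

Under a rule like this, a drawing that fools the facial estimator alone is not enough to gain access. Real systems would more likely combine calibrated scores than booleans, but the principle of avoiding a single point of failure is the same.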

Regulatory Pressure and Compliance

Governments worldwide are intensifying scrutiny of how platforms protect children online. Recent legislation in the European Union, the United Kingdom, and various US jurisdictions demands increasingly sophisticated age verification mechanisms. This regulatory landscape has spurred innovation in the identity verification sector, with companies developing more advanced technology to meet compliance standards while maintaining user privacy.

However, regulators must simultaneously acknowledge the inherent limitations of purely technological solutions. No software system can guarantee perfect age verification without implementing measures that compromise privacy or create barriers to legitimate user access.

Looking Forward: The Future of Age Verification

The discovery that simple drawings defeat advanced verification technology serves as a critical wake-up call for the industry. Rather than treating age verification as a solved problem, technology stakeholders must acknowledge ongoing challenges and invest in continuous improvement cycles.

The path forward likely involves combinations of biometric analysis, behavioral verification, and integration with official identity systems. As innovation continues, maintaining balance between protection and privacy will remain essential.

Conclusion

The ease with which young users can circumvent modern age verification technology underscores the complexity of digital safety in an increasingly connected world. While facial age estimation is a legitimate technological innovation, this vulnerability reveals the limitations of relying solely on automated biometric systems for age gating. As platforms, regulators, and security experts collaborate to develop more robust solutions, the fundamental lesson remains clear: cybersecurity requires layered defenses and continuous adaptation rather than single-point interventions. The coming years will determine whether the technology industry can develop verification systems that effectively protect minors while maintaining user privacy and accessibility.

Frequently Asked Questions

How are young people circumventing age verification systems?

Users draw facial features, particularly exaggerated mustaches and beards, directly on their faces before submitting photos for age verification. These artificial additions create visual anomalies that confuse the underlying machine learning models, causing them to either misclassify age or fail to properly analyze facial structures.

Why do simple drawings fool sophisticated AI systems?

Artificial intelligence models trained to recognize age patterns rely on specific visual datasets and learned patterns of facial features. When presented with non-standard visual input like drawings obscuring natural features, these models struggle to apply their training. The technology lacks the contextual reasoning humans instinctively use to distinguish genuine from artificial facial alterations.

What are platforms doing to fix this vulnerability?

Technology companies are implementing additional verification layers, including live video submissions with real-time analysis, behavioral biometrics, device fingerprinting, and cross-referencing with government-issued identification. The most effective approaches combine multiple verification methods rather than depending on facial analysis as a single point of verification.